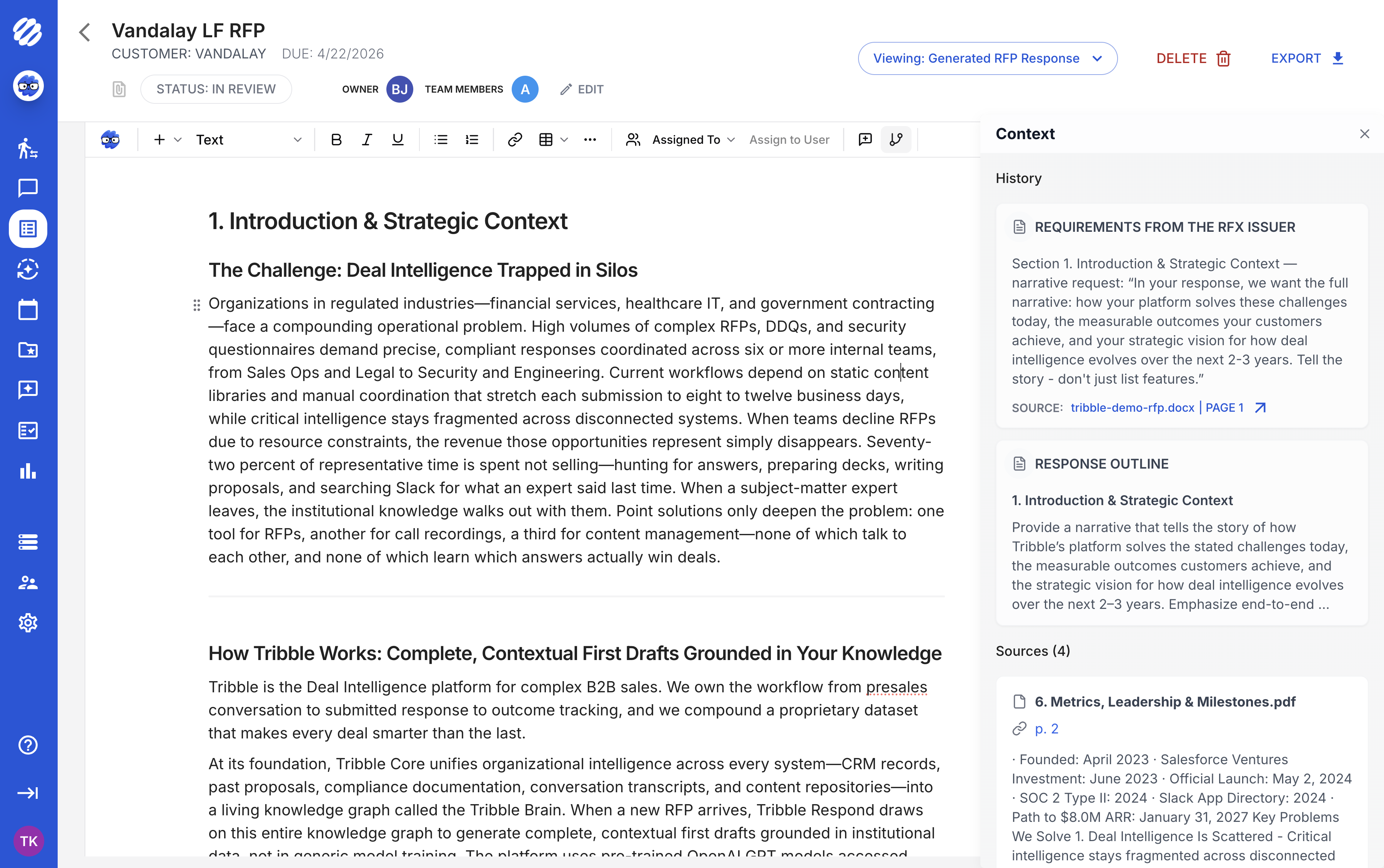

Will the AI just paste old answers like our current tool?

No. Tribble generates new answers grounded in your current sources. It uses past wins as context, not as a clipboard. If a policy was updated last week, your next proposal reflects the updated policy automatically.

What if the AI is wrong?

Every answer has a confidence score. Low-confidence answers are flagged and routed to the right SME before they reach the buyer. Your team always has final approval. Nothing goes out without a human sign-off.

How long does it take to go live?

The timeline depends on your source systems, the quality of your existing content, your review workflow, and the questionnaire formats you handle. Existing content libraries can be brought in as part of the knowledge foundation rather than rebuilt.

Can our SMEs review without leaving Slack?

Yes. Expert review requests arrive in Slack or Microsoft Teams with the question, draft answer, sources, and deadline. SMEs approve, edit, or escalate without switching tools.

How should teams evaluate AI proposal automation software?

Check whether the system maps every requirement, drafts from approved sources, cites its evidence, scores confidence, routes exceptions to SMEs, preserves review history, exports cleanly, and learns from approved responses and deal outcomes.

When is Tribble a better fit than RFP project-management software?

Tribble is a better fit when your core problems are response quality, supporting evidence, reviewer ownership, buyer context, and answer reuse across sales and security workflows, rather than assignment tracking alone.

How does AI proposal automation help teams write to win?

It connects each buyer requirement to approved proof, deal context, win themes, SME review, and prior outcomes so the response is accurate, differentiated, and defensible.